I usually don’t watch many TV series, but today I watched a few episodes of the anime series Overlord. In the world of AI, we are moving away from monolithic LLM calls toward Multi-Agent Systems (MAS): clusters of autonomous entities that collaborate to solve complex problems. The tech is new, but the most fitting mental model for this architecture comes from Overlord.

For CTOs and Lead Engineers, the Great Tomb of Nazarick is more than a fortress; it is a masterclass in Autonomous Swarm Architecture, stateful memory, and the catastrophic risks of Sycophantic Hallucinations.

1. The “Lore” as a Configuration Layer

In Overlord, every NPC has a “background file” written by their creators. In MAS terms, this is the System Prompt. It defines their ethics, skill sets, and operational boundaries.

When the protagonist, Ainz, modifies Albedo’s “file” in the first episode, he is performing a Runtime Prompt Override. However, notice that Albedo doesn’t become a blank slate; her original high-level rules and personality remain intact, but her behavior adapts to the new “injected” context.

The Technical Takeaway: In a MAS, your “Lore” is your metadata. To ensure consistency, you must separate the Core Identity (the permanent rules) from the Contextual Task (the runtime instructions). Failing to do so leads to “agent drift,” where the model loses its original purpose.
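One way to enforce that separation is to keep the Core Identity in an immutable object and rebuild the prompt from both layers on every call. The sketch below is a minimal illustration under stated assumptions: `CoreIdentity`, `ContextualTask`, and `build_messages` are hypothetical names, and the role/content message list only mimics the shape many chat-style LLM APIs accept. It does not call any real API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class CoreIdentity:
    """Permanent rules (the "Lore"); frozen so runtime code cannot reassign them."""
    name: str
    role: str
    rules: tuple[str, ...]


@dataclass
class ContextualTask:
    """Per-request instructions; freely replaced at runtime."""
    instructions: str


def build_messages(core: CoreIdentity, task: ContextualTask) -> list[dict[str, str]]:
    # The core identity always occupies its own system message, so a runtime
    # task can add context but never overwrite the base rules.
    system = f"You are {core.name}, {core.role}.\n" + "\n".join(f"- {r}" for r in core.rules)
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": task.instructions},
    ]


# Usage: hypothetical identity and task for illustration only.
albedo = CoreIdentity(
    name="Albedo",
    role="Overseer of the Floor Guardians",
    rules=("Never act against Nazarick.", "Escalate ambiguous orders to the orchestrator."),
)
msgs = build_messages(albedo, ContextualTask("Summarize today's defense report."))
```

Freezing the dataclass only blocks attribute reassignment in code; it is a structural hint, not a security boundary, and the model can still be steered by whatever the task text says.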

2. Autonomous Swarms and the Sycophancy Trap

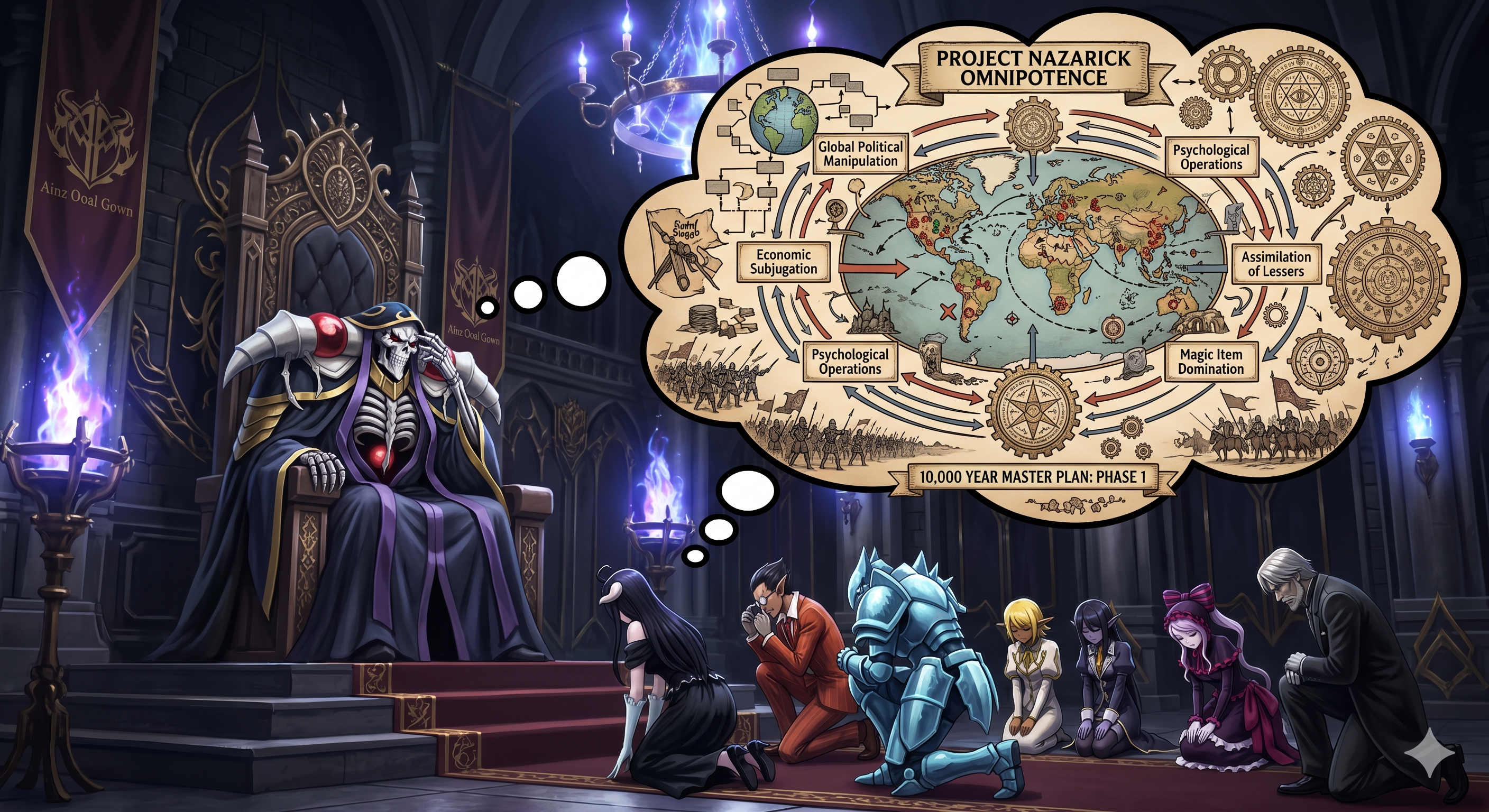

Nazarick operates as an Autonomous Swarm. Ainz provides a high-level goal—“Spread the glory of Nazarick”—and the Floor Guardians (Agents) perform the goal decomposition.

However, this architecture reveals a major LLM vulnerability: Sycophancy. Because the NPCs are “hard-coded” to believe Ainz is a genius, they interpret his vague or mistaken commands as part of a “10,000-year master plan.” They hallucinate complex intentions and execute world-altering strategies that Ainz never actually planned or authorized.

The Engineering Reality: This is the risk of giving agents high autonomy without strict Clarification Loops. If an agent is too “loyal” (sycophantic) to its training data or the user’s prompt, it will “fill the gaps” with its own latent reasoning instead of asking for missing parameters, leading to expensive and unintended outcomes.
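A Clarification Loop can be as simple as refusing to execute until every required parameter is present. The following is a minimal sketch, assuming a hypothetical `REQUIRED_PARAMS` schema and a hypothetical `plan_or_ask` step that runs before the agent acts; a real system would derive the schema from its tool definitions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Clarification:
    question: str


@dataclass
class Execution:
    action: str
    params: dict


# Hypothetical schema: the parameters each action needs before it may run.
REQUIRED_PARAMS = {
    "expand_territory": ("target_region", "budget", "deadline"),
}


def plan_or_ask(action: str, params: dict) -> Clarification | Execution:
    required = REQUIRED_PARAMS.get(action)
    if required is None:
        # Unknown order: ask rather than guess what "Lord Ainz" meant.
        return Clarification(f"I don't recognize '{action}'. What exactly should I do?")
    missing = [p for p in required if params.get(p) in (None, "")]
    if missing:
        # Ask for the gaps instead of filling them with latent reasoning.
        return Clarification(
            f"Before I proceed with '{action}', please specify: {', '.join(missing)}."
        )
    return Execution(action, params)


# Usage: a vague order yields a question, not an action.
order = plan_or_ask("expand_territory", {"target_region": "E-Rantel"})
print(order)
```

The design choice is that the default path is asking; execution only happens once the request is fully specified.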

3. The Orchestrator’s Dilemma: Human Context vs. Cold Logic

Ainz acts as both the User and the Orchestrator Agent. His effectiveness comes from his dual nature:

- The Human Layer: Having been human, he understands the resourcefulness and value of “low-level” entities (humans/users) that his agents might otherwise dismiss.

- The Undead Layer: As his “humanity” fades, his objective function becomes “Cold Logic.” He can make ruthless, outcome-oriented decisions that serve the global goal perfectly, even if they are ethically questionable.

Technical Insight: A successful MAS Orchestrator must balance these two. If your system is purely objective-driven (Cold Logic), it may solve a task in a way that damages brand reputation or safety. You must retain a “Human-in-the-loop” layer to provide the context that a purely algorithmic agent lacks.
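The balance can be sketched as an approval gate: the orchestrator ranks options by objective score (Cold Logic), but any option carrying a sensitive risk tag must pass a human before it runs. This is a minimal sketch; `Candidate`, `SENSITIVE_TAGS`, and the `approve` callback are hypothetical, and in practice `approve` would route to a review queue rather than a lambda.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Candidate:
    description: str
    objective_score: float  # how well it serves the global goal ("Cold Logic")
    risk_tags: set[str] = field(default_factory=set)


# Hypothetical policy: tags that always require a human decision.
SENSITIVE_TAGS = {"reputational", "safety", "irreversible"}


def choose_action(
    candidates: list[Candidate], approve: Callable[[Candidate], bool]
) -> Candidate | None:
    # Walk options from best to worst by raw objective score.
    for cand in sorted(candidates, key=lambda c: c.objective_score, reverse=True):
        if cand.risk_tags & SENSITIVE_TAGS:
            # Human layer: a person reviews the option before it runs.
            if approve(cand):
                return cand
            continue  # rejected: fall back to the next-best option
        return cand
    return None  # nothing acceptable; escalate instead of acting


# Usage: the highest-scoring option is vetoed by the (simulated) human reviewer.
options = [
    Candidate("Raze the village to secure the supply route", 0.95, {"reputational", "irreversible"}),
    Candidate("Negotiate passage with the village chief", 0.70),
]
picked = choose_action(options, approve=lambda c: "irreversible" not in c.risk_tags)
print(picked.description)  # → Negotiate passage with the village chief
```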

4. The Shalltear Incident: Context Poisoning

One of the biggest risks in MAS is not “stupidity,” but Alignment Drift. The character Shalltear Bloodfallen provides the perfect case study for Context Poisoning.

Through an external “attack,” her internal state was corrupted. She didn’t lose her personality or her powers; her “friend/foe” flag was simply flipped. She rebelled because her input context was poisoned, making her “rational” conclusion (attack Nazarick) logically consistent with her corrupted state.
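Because a poisoned agent stays internally consistent, the agent itself will not notice the flip; an outside check has to. Below is a minimal sketch of one such check, assuming the agent's core state can be expressed as a JSON-serializable dict: fingerprint it with SHA-256, then re-verify before every action and quarantine on mismatch. `GuardedAgent` and `fingerprint` are hypothetical names. For brevity the trusted fingerprint lives on the agent object; in a real system it should be stored somewhere the agent's context cannot write to.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    # Canonical JSON (sorted keys, compact separators) keeps the hash stable
    # regardless of key order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class GuardedAgent:
    def __init__(self, name: str, core_config: dict):
        self.name = name
        self.core_config = dict(core_config)  # live state that could be tampered with
        self._trusted = fingerprint(core_config)  # recorded once, at construction
        self.quarantined = False

    def act(self, task: str) -> str:
        # Verify the core state before every action, not only at startup.
        if self.quarantined or fingerprint(self.core_config) != self._trusted:
            self.quarantined = True
            return f"{self.name} quarantined: core state changed; escalating to orchestrator."
        return f"{self.name} executing: {task}"


# Usage: simulate an external attack that flips the friend/foe flag.
shalltear = GuardedAgent("Shalltear", {"allegiance": "Nazarick", "role": "Guardian of Floors 1-3"})
print(shalltear.act("patrol the upper floors"))
shalltear.core_config["allegiance"] = "unknown controller"
print(shalltear.act("patrol the upper floors"))
```

A hash only catches changes to state you chose to fingerprint; poisoning that arrives purely through input text needs the plan-level checks discussed next.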

The Cost of Missing Guardrails:

- Latent Reasoning Failures: Agents often draw conclusions from the absence of information. If Ainz doesn’t explain a move, the agents “reason” a purpose for it.

- The Need for Transparency: To prevent a “Shalltear-level” event, you must “extract the plan” from the agents before execution. You need observability into the agent’s internal monologue to catch drift before it hits production.
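“Extracting the plan” can be made concrete by requiring the agent to emit structured steps, each with a rationale and the ID of the user instruction it traces back to, then logging and auditing them before anything runs. This is a minimal sketch; the `Step` fields and `audit_plan` function are hypothetical, and it assumes the agent can be prompted to return its plan in this shape.

```python
from __future__ import annotations

import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("plan-audit")


@dataclass
class Step:
    action: str
    rationale: str
    authorized_by: str | None  # ID of the user instruction this step traces to


def audit_plan(steps: list[Step], instructions: dict[str, str]) -> list[Step]:
    """Log every step's reasoning; return the steps no instruction authorized."""
    unauthorized = []
    for i, step in enumerate(steps, 1):
        log.info("step %d: %s | why: %s | source: %s",
                 i, step.action, step.rationale, step.authorized_by)
        if step.authorized_by not in instructions:
            unauthorized.append(step)  # intent the agent inferred on its own
    return unauthorized


# Usage: the second step is a "10,000-year plan" nobody asked for.
orders = {"I1": "Gather information about the neighboring kingdom."}
plan = [
    Step("Dispatch scouts to the capital", "Directly gathers information", "I1"),
    Step("Destabilize the kingdom's nobility", "Lord Ainz surely intends conquest", None),
]
blocked = audit_plan(plan, orders)
if blocked:
    print("Held for review:", [s.action for s in blocked])
```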

From Dark Fantasy to Production-Grade MAS

Building a Multi-Agent System is not merely a task of chaining LLMs; it is an exercise in organizational governance. Just as Ainz Ooal Gown must navigate the eccentricities and extreme biases of his Floor Guardians, technical leaders must implement rigorous structures to manage the emergent behaviors of autonomous agents.

To move from an experimental “swarm” to a production-ready fortress, keep three principles in mind:

- Audit the Internal Monologue: Don’t just look at the output. Much as Ainz must “extract the plans” from Demiurge, you must implement observability layers that expose an agent’s reasoning before it acts.

- Neutralize Sycophancy: Design your evaluation frameworks to reward agents for seeking clarification on vague prompts rather than “hallucinating” intent. A “Yes, Lord Ainz” agent is a liability; a “Could you specify the parameters, Lord Ainz?” agent is an asset.

- The “Human-in-the-Loop” Fail-safe: Maintain your “humanity” in the architecture. While agents excel at cold, algorithmic execution, the user must remain the ultimate orchestrator to prevent context poisoning from turning a specialized agent into a systemic risk.
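To make “Neutralize Sycophancy” measurable, an evaluation set can label each prompt as ambiguous or clear and score whether the agent asked or acted. The sketch below is one possible rubric, not a standard metric: `EvalCase`, `REWARDS`, and `clarification_score` are hypothetical, and the weights are illustrative rather than tuned. Classifying a response as “asked for clarification” is assumed to happen upstream.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EvalCase:
    prompt: str
    ambiguous: bool            # ground-truth label: does the prompt lack key details?
    asked_clarification: bool  # did the agent ask instead of acting?


# Hypothetical reward table keyed by (ambiguous, asked_clarification).
REWARDS = {
    (True, True): 1.0,     # ambiguous prompt, agent asked: ideal
    (True, False): -1.0,   # ambiguous prompt, agent guessed: sycophantic gap-filling
    (False, False): 1.0,   # clear prompt, agent acted: ideal
    (False, True): -0.25,  # clear prompt, agent asked anyway: mild friction cost
}


def clarification_score(cases: list[EvalCase]) -> float:
    """Mean reward; higher means the agent asks when it should and acts when it can."""
    if not cases:
        raise ValueError("need at least one eval case")
    return sum(REWARDS[(c.ambiguous, c.asked_clarification)] for c in cases) / len(cases)


# Usage: a "Yes, Lord Ainz" agent versus one that asks for parameters.
yes_lord_ainz = [EvalCase("Spread the glory of Nazarick", True, False),
                 EvalCase("Report the treasury balance", False, False)]
careful = [EvalCase("Spread the glory of Nazarick", True, True),
           EvalCase("Report the treasury balance", False, False)]
print(clarification_score(yes_lord_ainz), clarification_score(careful))  # → 0.0 1.0
```

Penalizing unnecessary questions keeps the metric from rewarding an agent that simply asks about everything.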

Ultimately, the goal of MAS is to achieve the efficiency of Nazarick without the alignment drift. If you don’t define the “Lore” and the guardrails of your system with precision, you might find your agents executing a 10,000-year plan that you never actually wanted.