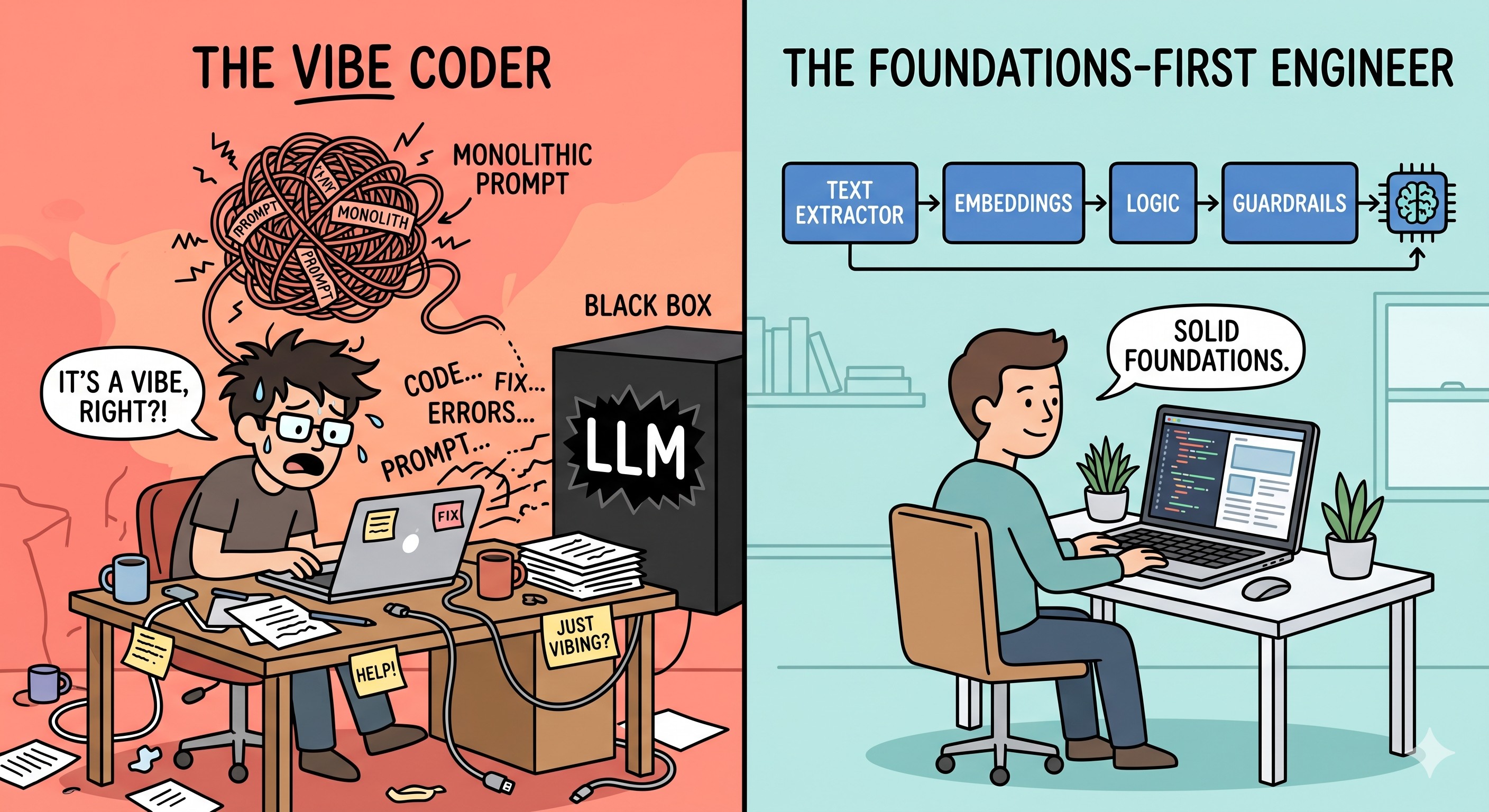

In a world where a new “State of the Art” (SOTA) model drops every Tuesday, the tech industry is currently flooded with “AI Experts” who have never looked under the hood. While “vibe coding”—relying on intuition and prompt engineering—can yield a quick prototype, it often fails the production stress test.

Building resilient AI systems requires moving beyond the hype and returning to the foundations: Linear Algebra, Calculus, and the probabilistic nature of Machine Learning.

1. Hallucinations are Probabilistic, Not Magical

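Most developers treat LLM hallucinations as a mysterious “glitch.” Once you understand the foundations, however, you realize that neural networks are essentially probabilistic machines. They are trained with a loss function and gradient descent. The loss measures how far the predicted output is from the actual one (for an LLM, typically the cross-entropy between the predicted next-token distribution and the token that actually came next), and gradient descent repeatedly nudges the weights to shrink that loss.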
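To make the training objective concrete, here is a toy sketch in plain Python. It is not a neural network: the "model" is just one free logit per candidate token for a single fixed prompt (think "The capital of Australia is"), and the corpus counts are hypothetical. Gradient descent on the cross-entropy loss drives the predicted distribution toward whatever the training data said most often, whether or not it is true.

```python
import math

def softmax(z):
    # Convert logits to probabilities (subtract the max for numerical stability)
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

# Hypothetical corpus statistics: which token followed one fixed context
counts = {"Sydney": 60, "Canberra": 35, "Melbourne": 5}
tokens = list(counts)
n = sum(counts.values())
target = [counts[t] / n for t in tokens]  # empirical next-token distribution

def cross_entropy(z):
    # Average negative log-likelihood of the observed next tokens
    p = softmax(z)
    return -sum(q * math.log(pi) for q, pi in zip(target, p))

logits = [0.0, 0.0, 0.0]  # the toy "model": one free logit per token
lr = 1.0                  # learning rate (eta)
initial_loss = cross_entropy(logits)

for _ in range(500):
    p = softmax(logits)
    # For softmax + cross-entropy, the gradient w.r.t. the logits is p - target
    grad = [pi - qi for pi, qi in zip(p, target)]
    # Gradient descent step: theta <- theta - eta * grad
    logits = [z - lr * g for z, g in zip(logits, grad)]

learned = softmax(logits)
print({t: round(p, 3) for t, p in zip(tokens, learned)})
```

The learned probabilities converge to the corpus frequencies, so the most probable answer is simply the most frequent one in the (hypothetical) training data.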

If a model is prompted with insufficient context, it will naturally return a statistically likely sequence based on its training corpus, even if that sequence is factually incorrect. Understanding how the input context shapes the output probability distribution allows an engineer to diagnose whether a failure stems from missing “ground truth” context or from an inherent bias in the training data.
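A sketch of the decoding side, using made-up logits for three candidate tokens: without context the popular-but-wrong answer wins, and with a (hypothetical) retrieved passage in the prompt the distribution shifts toward the grounded answer. The `softmax`, `greedy`, and `sample` helpers are illustrative names, not a library API.

```python
import math
import random

def softmax(logits):
    # Turn raw scores into probabilities (subtract the max for numerical stability)
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

candidates = ["Sydney", "Canberra", "Melbourne"]

# Hypothetical logits for "The capital of Australia is" with no extra context:
# the popular-but-wrong answer scores highest.
logits_no_context = [2.1, 1.9, 0.5]

# Hypothetical logits after a retrieved passage stating the fact is prepended.
logits_with_context = [0.8, 3.2, 0.3]

def greedy(logits):
    # Greedy decoding: return the single most probable token
    probs = softmax(logits)
    return candidates[probs.index(max(probs))]

def sample(logits, rng):
    # Sampling: draw one token in proportion to its probability
    return rng.choices(candidates, weights=softmax(logits), k=1)[0]

print(greedy(logits_no_context))    # → Sydney (likely, but wrong)
print(greedy(logits_with_context))  # → Canberra (grounded by context)
print(sample(logits_no_context, random.Random(0)))  # any candidate; no fact-check either way
```

Neither decoding strategy consults the truth; only the change in context moved the outcome, which is exactly the "missing ground truth" diagnosis described above.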

2. Filtering the SOTA Noise

The temptation to jump to the newest, most expensive model is high. But a foundation-first architect applies a cold, ROI-driven filter:

- Cost vs. Performance: If a current model works with a robust evaluation harness and proper guardrails, doubling the inference cost for a 2% “vibe” improvement is poor engineering.

- Architecture > Frameworks: Many new frameworks are just layers of Markdown rules that add noise. A true specialist identifies whether a new release offers a genuine architectural advancement or just more “prompt-wrapping.”
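The cost-vs-performance filter can be written down as plain arithmetic. The sketch below assumes a tiny evaluation harness (`evaluate`) and a hypothetical decision rule (`worth_upgrading`) with a business-chosen threshold of accuracy gained per extra dollar; the stand-in models, test cases, and prices are all invented for illustration.

```python
def evaluate(model_fn, cases):
    # Minimal evaluation harness: fraction of labeled cases answered correctly
    passed = sum(1 for x, expected in cases if model_fn(x) == expected)
    return passed / len(cases)

def worth_upgrading(cur_acc, cur_cost, new_acc, new_cost, min_gain_per_dollar):
    # Hypothetical rule: each extra dollar must buy at least min_gain_per_dollar accuracy
    gain = new_acc - cur_acc
    extra_cost = new_cost - cur_cost
    if gain <= 0:
        return False  # not measurably better on the harness
    if extra_cost <= 0:
        return True   # better and no more expensive
    return gain / extra_cost >= min_gain_per_dollar

# Hypothetical labeled cases: (input, expected label)
cases = [(i, i % 2 == 0) for i in range(50)]

def current_model(x):
    # Stand-in model: wrong on inputs 0-4 (5 of 50 cases)
    return (x % 2 == 0) if x >= 5 else (x % 2 != 0)

def candidate_model(x):
    # Stand-in "new SOTA" model: wrong on inputs 0-3 (4 of 50 cases)
    return (x % 2 == 0) if x >= 4 else (x % 2 != 0)

cur_acc = evaluate(current_model, cases)    # 0.90
new_acc = evaluate(candidate_model, cases)  # 0.92

# Hypothetical prices in dollars per 1k calls: the candidate doubles the cost
print(worth_upgrading(cur_acc, 5.0, new_acc, 10.0, min_gain_per_dollar=0.01))  # → False
```

With these numbers the candidate is measurably better (0.92 vs. 0.90) but doubles the cost, so each extra dollar buys only 0.004 accuracy, below the assumed 0.01 threshold.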

3. Modular Pipelines vs. The Monolith

The hallmark of a “vibe-coded” project is a massive, single-file script where the LLM is expected to handle logic, formatting, and decision-making simultaneously. Professional AI engineering demands modularity.

This is what a “vibe-coded” monolith looks like:

```python
# The "Vibe-Coded" Monolith: brittle and hard to debug
from openai import OpenAI

def process_everything(file_path):
    # Mixing concerns: extraction, logic, and API calls in one go
    with open(file_path, "r") as f:
        text = f.read()

    # Massive prompt trying to do 5 things at once
    prompt = f"Extract names, dates, and sentiment from this: {text}. Also, format it as JSON and tell me if the user is angry."

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": prompt}],
    )

    # Zero error handling or validation of the probabilistic output
    return response.choices[0].message.content
```

By building single-purpose libraries and orchestrating them into specialized pipelines, you create systems that are maintainable and scalable. Here is how a modular pipeline might start to look:

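```python
# Example: Modular AI Pipeline Architecture

class DocumentProcessor:
    def extract_text(self, file_path):
        # Specific logic for extraction (a plain-text read stands in here)
        with open(file_path, "r", encoding="utf-8") as f:
            return f.read()


class InformationExtractor:
    def __init__(self, model_client):
        # model_client: any LLM client, e.g. a Gemini client
        self.client = model_client

    def get_entities(self, text):
        # Targeted extraction with a specific schema, using self.client
        raise NotImplementedError("call the model with a narrow, schema-bound prompt")


# Orchestration over Monolith: each stage can be tested and swapped independently
def run_pipeline(path, model_client):
    raw_text = DocumentProcessor().extract_text(path)
    data = InformationExtractor(model_client).get_entities(raw_text)
    return data
```

The Bottom Line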

A foundation-first architect applies a cold, ROI-driven filter to new releases. If a current model works with a robust evaluation harness and proper guardrails, doubling the inference cost for a new SOTA model just for “better vibes” is poor engineering.

Success in the AI era belongs to those who understand the technology, not just those who know how to use it. My Master's in AI at Universidad San Francisco de Quito (USFQ) gave me the mathematical bedrock required to distinguish signal from noise, and along the way I came across a great resource that will get you there whether you are a beginner trying to get up to speed or a seasoned engineer looking to review and reinforce core concepts: https://aiengineeringfromscratch.com/